iOS dev: How to get your code coverage right?

When I started writing my previous blog article on quality metrics for iOS, I wasn't prepared to spend so much time getting something robust and correct.

The part I stumbled on most was code coverage. Not because it is that difficult to make it work (there are plenty of resources on the Web), but because in every article I read, the solution worked yet did not report accurate and useful metrics (I am sure I have missed some articles; apologies if so).

Note: Xcode's support for coverage is very fragile and has changed with almost every Xcode version. That did not help, and it explains why people focused first on simply making it work.

Here are the pitfalls I kept seeing in articles about code coverage:

- Pitfall #1: only the files under test appear in the coverage report. You know the coverage of what you tested, but not how much you left untested. In my opinion, this is the biggest pitfall.

- Pitfall #2: third-party libraries and test files skew the coverage figures

- Pitfall #3: the report is not structured, so it is difficult to analyze and act upon

- Pitfall #4: no article distinguishes between GHUnit and OCUnit, even though there are real differences

The setup I proposed in the previous article is still valid and avoids these pitfalls. As that article was already long enough, I kept the detailed explanations for a new one. Here it is.

Let's tackle these issues one by one. If you tried to set up code coverage using my last article, this should make all the steps a bit more logical.

Pitfall #1: Cover it all!

The two most popular blog posts on how to get code coverage for the iPhone can be found here and here. Follow them and you will only get the coverage for the files that are actually tested by unit tests.

OK, you might know that all the other classes are not tested, but how much do they weigh? Do other people on your team know? What if your "standard" says that this part of the application should be covered (for example, a utility class)?

Why does this happen? Because the recommended approach is to enable the 'Generate Test Coverage Files' and 'Instrument Program Flow' build settings only on the test target. As a result, only the tested files and the tests themselves get .gcno files (code to cover), generated at compile time, and .gcda files (code covered), generated at execution time.

This is why my setup also enables the 'Generate Test Coverage Files' and 'Instrument Program Flow' build settings on the main target. Note that I only enable them for the Debug configuration, to avoid issues with production code.

Is that enough? No. The second difference is to make GCOVR operate on the main bundle rather than the test bundle, because the test bundle only contains the .gcno/.gcda files of the tests, while the main bundle contains both. This is what the --object-directory build/YOUR-PROJECT-NAME.build/Debug-iphonesimulator/YOUR-MAIN-TARGET-NAME.build/Objects-normal/i386 option on the GCOVR command line does.
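If you drive GCOVR from a script, a minimal sketch of how the command line could be assembled is shown below. The function name, its defaults (root ".", output build/coverage.xml) and the use of Python are my own assumptions; --root, --object-directory, --xml and --output are regular gcovr options, although their spelling may vary between gcovr versions. The object directory simply mirrors the path quoted above, with the same placeholders.

```python
import shlex
from pathlib import PurePosixPath
from typing import List


def gcovr_command(project: str, main_target: str,
                  root: str = ".", output: str = "build/coverage.xml") -> List[str]:
    """Assemble a gcovr call that reads the main target's object files.

    Hypothetical helper: the main target's object directory holds the
    .gcno/.gcda files for the application code, whereas the test bundle
    only holds the ones for the tests.
    """
    object_dir = (PurePosixPath("build") / f"{project}.build" / "Debug-iphonesimulator"
                  / f"{main_target}.build" / "Objects-normal" / "i386")
    return [
        "gcovr",
        "--root", root,                         # directory whose sources are reported
        "--object-directory", str(object_dir),  # where the .gcno/.gcda files live
        "--xml",                                # Cobertura-style XML for Jenkins
        "--output", output,                     # report file picked up by the plugin
    ]


if __name__ == "__main__":
    # Print the command with the placeholder names used in the article.
    print(shlex.join(gcovr_command("YOUR-PROJECT-NAME", "YOUR-MAIN-TARGET-NAME")))
```

Returning a list rather than a string lets you pass it straight to subprocess.run without worrying about shell quoting.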

Note: this only applies to OCUnit. GHUnit has other differences, which I cover later (Pitfall #4).

You can first check locally that everything works by going to ~/Library/Developer/Xcode/DerivedData/YOUR-PROJECT-NAME/Build/Intermediates/YOUR-MAIN-TARGET-NAME.build/Objects-normal/i386. Once you have run your tests, it should contain many .gcno and .gcda files. Check that files you know are untested have a .gcno file but no .gcda file.
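Rather than eyeballing the directory, a small script can list the objects that have a .gcno but no .gcda. This is a rough sketch under my own assumptions (hypothetical function names, directory passed on the command line): a .gcda is written as soon as any instrumented line of that object runs, so a file touched at application launch will not show up here even if no test exercises it.

```python
import os
import sys
from typing import Iterable, List


def untested_stems(filenames: Iterable[str]) -> List[str]:
    """Names that have a .gcno (compiled with coverage) but no .gcda
    (no instrumented line in them ran during the test run)."""
    names = list(filenames)
    gcno = {n[: -len(".gcno")] for n in names if n.endswith(".gcno")}
    gcda = {n[: -len(".gcda")] for n in names if n.endswith(".gcda")}
    return sorted(gcno - gcda)


def untested_in_dir(object_dir: str) -> List[str]:
    """Apply untested_stems to the files of an object directory."""
    return untested_stems(os.listdir(os.path.expanduser(object_dir)))


if __name__ == "__main__":
    # Usage: python untested.py <Objects-normal/i386 directory of the main target>
    for stem in untested_in_dir(sys.argv[1]):
        print(stem)
```

Keeping the set logic in a pure function, separate from the directory listing, makes it easy to check without touching DerivedData.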

Once this is done, your coverage metric in Jenkins will drop a lot and show you what your coverage really is!

Pitfall #2: It's not mine!

Now that you cover all your code, you get a new problem: the report also includes code that is not yours! This is undesirable, because that kind of noise makes a metric misleading, and a misleading metric is quickly abandoned.

This is resolved in two steps:

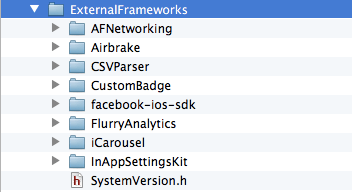

- isolate your third-parties libraries in a folder (this is common sense even if you do not want to compute code coverage). Notice it should be a real folder and not a Xcode logical one. I have put all mine in an ExternalFrameworks directory:

- add a

--exclude '.*ExternalFrameworks.*'flag to the GCOVR command line

Note: you should also exclude your unit tests themselves with --exclude '.*Tests.*' but this part was often already covered in the mentioned articles
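gcovr filters files by matching the --exclude regular expressions against source file paths. The snippet below is not gcovr's code; it is only a sketch that reproduces the effect of the two patterns above on a few hypothetical paths, which is handy for checking a pattern before putting it in the Jenkins job.

```python
import re
from typing import Iterable, List, Sequence

# The same regular expressions passed to gcovr with --exclude.
EXCLUDES = (r".*ExternalFrameworks.*", r".*Tests.*")


def kept_files(paths: Iterable[str], excludes: Sequence[str] = EXCLUDES) -> List[str]:
    """Drop every path matched by one of the exclude patterns.

    The leading '.*' lets each pattern match anywhere in the path, so an
    ExternalFrameworks folder at any depth is filtered out.
    """
    compiled = [re.compile(p) for p in excludes]
    return [p for p in paths if not any(rx.match(p) for rx in compiled)]


if __name__ == "__main__":
    for path in kept_files(["Classes/Model/User.m",
                            "ExternalFrameworks/SomeLib/SomeLib.m",
                            "MyAppTests/UserTests.m"]):
        print(path)
```

Be aware that '.*Tests.*' also drops a production file whose name merely contains "Tests" (say, a hypothetical ABTestsHelper.m); anchoring on the test directory, for example '.*/MyAppTests/.*', is stricter.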

Once this is done, your coverage metric in Jenkins should increase significantly, as I haven't seen many tests in the iOS frameworks I use!

Pitfall #3: Make it actionable!

The last pitfall I have seen is that all these reports are flat: they give you only a project-level and a file-by-file view of the coverage, with no intermediate view. Very often we structure our applications in layers, and Apple's MVC pattern encourages us to do so. Wouldn't it be great to know the coverage of each layer? This matters all the more because you don't test all layers with the same kind of tests, nor put the same testing effort into each of them.

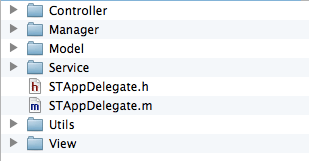

My best practice is to structure your project in directories that are both meaningful for day-to-day work and for the code coverage analysis.

This is an example of a typical structure I use:

Notice that you should use real folders (not Xcode logical ones) if you want this to have an impact on Cobertura report.

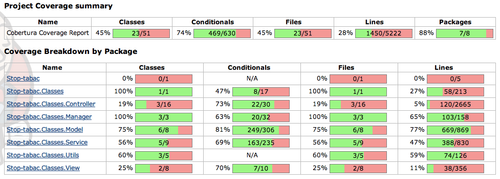

This is how I get the following report already shown in the preceding post:

In this way I can quickly check that sensitive application layers like domain (Model directory here), services and utilities (Utils here) are correctly tested.
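The Cobertura Jenkins plugin builds this per-directory view from the package elements of the XML report. To look at the same kind of numbers outside Jenkins, here is a minimal sketch that rolls up line coverage per top-level directory. It assumes the Cobertura XML layout gcovr writes (class elements with a filename attribute and lines/line children carrying a hits attribute) and file names relative to the gcovr root; the function name and the depth parameter are my own.

```python
import xml.etree.ElementTree as ET
from collections import defaultdict
from typing import Dict, List


def coverage_by_directory(xml_text: str, depth: int = 1) -> Dict[str, float]:
    """Line coverage (percent) per leading directory of each class filename."""
    counts: Dict[str, List[int]] = defaultdict(lambda: [0, 0])  # dir -> [covered, total]
    for cls in ET.fromstring(xml_text).iter("class"):
        parts = cls.get("filename", "").split("/")
        # Files directly at the root are grouped under ".".
        directory = "/".join(parts[:depth]) if len(parts) > depth else "."
        # Only the class-level <lines>; <method> elements may repeat them.
        for line in cls.findall("lines/line"):
            counts[directory][1] += 1
            if int(line.get("hits", "0")) > 0:
                counts[directory][0] += 1
    return {d: 100.0 * covered / total
            for d, (covered, total) in counts.items() if total}
```

If your sources live under an extra top-level folder (for instance a hypothetical Classes directory), pass depth=2 to get Classes/Model, Classes/Utils and so on.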

As a rule of thumb, here is what I currently target for unit test code coverage in my applications:

- 100% code coverage on Model, Manager, Utils. Build should fail under 80%. These layers are normally 100% testable without much mocking effort.

- 80% code coverage on Service. Build should fail under 50%. This is because a few services may require significant mocking effort.

- 0% code coverage on Controllers, Views. Build should not fail because of it.

Unfortunately, the Cobertura Jenkins plugin does not yet support per-package thresholds (vote for the open issue in JIRA here!). Someone has posted a workaround on stackoverflow.com, but I have not tested it yet.
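Until the plugin supports it, one possible stopgap (my own sketch, not the Stack Overflow solution mentioned above) is a small script run as an extra Jenkins shell step that fails the build when a layer drops under its threshold. It assumes the per-layer coverage percentages are already available as a JSON object on standard input; the function name, the input format and the layer keys are assumptions that mirror the directory names and thresholds above.

```python
import json
import sys
from typing import Dict, List, Mapping

# "Build should fail under" values from the rule of thumb above, in percent.
# Controllers and Views are deliberately absent: they never fail the build.
FAIL_UNDER: Dict[str, float] = {"Model": 80.0, "Manager": 80.0, "Utils": 80.0, "Service": 50.0}


def failing_layers(coverage: Mapping[str, float],
                   thresholds: Mapping[str, float] = FAIL_UNDER) -> List[str]:
    """Layers present in the report whose coverage is below their threshold.
    Layers missing from the report are skipped rather than treated as 0%."""
    return sorted(layer for layer, minimum in thresholds.items()
                  if layer in coverage and coverage[layer] < minimum)


if __name__ == "__main__":
    # Expects e.g. {"Model": 92.5, "Service": 61.0, "Controllers": 3.0} on stdin.
    failed = failing_layers(json.load(sys.stdin))
    if failed:
        print("Coverage below threshold for: " + ", ".join(failed))
        sys.exit(1)  # a non-zero exit code marks the Jenkins shell step as failed
```

Skipping missing layers is a design choice: a project without a Manager directory should not fail for that reason alone.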

Of course, once you start adding UI tests, the coverage of Controllers/Views should increase significantly. I have not yet tried to include these tests in the coverage report, but I hope to do so soon.

Once this is done, it should be clear which part of the application to test next.

Pitfall #4: Of course xxUnit is the best tool!

Last but not least: we all have our preferred tools. OCUnit and GHUnit both have strong advocates, and articles on getting test results or coverage very often cover only one of them.

I won't repeat the explanations from my previous article, but will focus on what is structurally different between the two:

- GHUnit is not integrated into Xcode: this is why a build setting like 'Test after build' is useless for it, and also why you have to set 'Application does not run in background' for GHUnit so that the application quits at the end of the tests (and writes the test reports).

- GHUnit builds a completely separate application: this is why it has its own bundle and its own AppDelegate, and also why you have to put fopen$UNIX2003 and fwrite$UNIX2003 in a different place. Most importantly, this is why you need to copy the .gcno files generated for your application code into your test bundle; otherwise you will not get coverage for untested files (and you are back to Pitfall #1). This is what the following command is for, and it must run before the GCOVR command line to be useful:

cp -n build/YOUR-PROJECT-NAME.build/Debug-iphonesimulator/YOUR-MAIN-TARGET-NAME.build/Objects-normal/i386/*.gcno build/YOUR-PROJECT-NAME.build/Debug-iphonesimulator/YOUR-TEST-TARGET-NAME.build/Objects-normal/i386 || true
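If your build scripts are written in Python rather than shell, the copy step can be sketched as below. It is only an equivalent of the cp -n ... || true line above under my own assumptions: the function name is hypothetical, and the main target's and test target's Objects-normal/i386 directories are passed in as arguments.

```python
import shutil
from pathlib import Path
from typing import List


def copy_gcno(src_dir: str, dst_dir: str) -> List[str]:
    """Copy *.gcno files from src_dir into dst_dir, never overwriting a file
    that already exists there (the behaviour of `cp -n`).

    Returns the names actually copied. A missing destination yields an empty
    list instead of an error, mirroring the `|| true` of the shell version.
    """
    src, dst = Path(src_dir), Path(dst_dir)
    if not dst.is_dir():
        return []
    copied = []
    for gcno in sorted(src.glob("*.gcno")):  # only notes files, no .gcda
        target = dst / gcno.name
        if not target.exists():
            shutil.copy2(gcno, target)
            copied.append(gcno.name)
    return copied
```

As with the shell command, call it after the GHUnit test run and before invoking gcovr on the test target's object directory.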

Conclusion

The seemingly obscure steps detailed in the previous article should make sense now, shouldn't they?

I hope that this will help you make the most of your improved or new coverage metrics!