Data Grid or NoSQL? Same, same but different…

For three years now, NoSQL, as a family of technologies for Big Data, has spread across the world and is challenging the centralized world of the RDBMS. Yet distributed storage is not new: banks and online gaming platforms have for several years been using technologies called "data grids" to address latency and throughput issues. And to be completely frank, "Big Data" is not far from being the "new SOA": a radical paradigm shift lost in the middle of commercial buzzwords. But that's another story...

What are the common points? The main differences?

In the rest of the article, the term NoSQL refers to "transaction-oriented" solutions like Cassandra, Riak, Voldemort and DynamoDB. On the other side, "Data Grid" groups solutions like Gigaspaces, Gemfire/SQLFire and Oracle Coherence.

Both Data Grids and NoSQL are about distributed storage

No surprise... this is a distributed vision of the "database" and of storage, which makes it possible to support more throughput and more volume, generally under cost constraints. The main idea is that instead of using one high-end server to store and query data, you use several "low-end" servers to store more data, increase throughput, scale out and address elasticity issues. Indeed, this article reminds us that the unit cost of a transaction is approximately 4 times cheaper on a cluster of low-end servers than on a high-end server (the main drawback of distributed storage being bandwidth consumption, which stays expensive).

So in both cases, the distributed vision of the storage implies:

- Partitioning the data based on a key. The key is then associated with a server (or with a specific bucket served by a server).

- Routing the queries to the server that holds the data. The main idea is to avoid asking all the servers for a specific piece of data, so the storage system needs to route the query to the right server. There are different implementations of that routing. Solutions like Cassandra implement server-side routing, which almost systematically implies at least one extra network hop (unless you are lucky and hit the right server). Solutions like Voldemort use client-side routing. "Data Grids" like Gemfire also use client-side routing: the client learns from the cluster where the data is located (and thus avoids the extra network hops).

- Replicating the data. All these distributed systems use replication mechanisms to ensure availability and, in some cases, durability (by reducing the probability of losing a server before the data has been replicated, or by limiting data corruption).

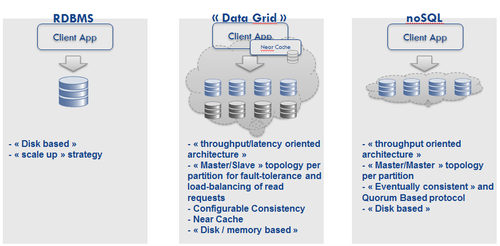
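
The three points above (partitioning, routing, replication) can be sketched in a few lines. This is only a toy illustration under stated assumptions: the `Cluster` class, the node names, the bucket count and the "next server in the list" replica placement are all hypothetical and do not reproduce the algorithm of any of the products mentioned.

```python
import hashlib


class Cluster:
    """Toy partitioned store: keys map to buckets, buckets map to servers."""

    def __init__(self, servers, buckets=16, replicas=2):
        self.servers = list(servers)
        self.buckets = buckets
        self.replicas = replicas

    def bucket_for(self, key):
        # Use a stable hash (unlike Python's built-in hash()) so that every
        # client computes the same bucket for the same key.
        digest = hashlib.md5(key.encode("utf-8")).digest()
        return int.from_bytes(digest[:4], "big") % self.buckets

    def owners(self, key):
        # The bucket selects the primary server; the replicas are simply the
        # following servers in the list, wrapping around (an assumption).
        first = self.bucket_for(key) % len(self.servers)
        return [self.servers[(first + i) % len(self.servers)]
                for i in range(self.replicas)]


cluster = Cluster(["node-a", "node-b", "node-c"])
owners = cluster.owners("user:42")  # primary first, then its replica
```

With client-side routing, the client itself would run something like `owners()` and contact the primary directly. With server-side routing, the client contacts any node, and that node runs the same computation before forwarding the request, hence the extra hop.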

Data Grids and NoSQL come from two distinct worlds: latency-oriented or throughput-oriented architecture

I was rereading this article by Vogels. As he stated (talking about GPUs and CPUs):

"… the most fundamental abstraction trade-off has always been latency versus throughput. These trade-offs have even impacted the way the lowest level building blocks in our computer architectures have been designed. Modern CPUs strongly favor lower latency of operations with clock cycles in the nanoseconds "

Even if it is quite difficult, should I say dangerous, to compare things, we can see the same trade-offs between NoSQL and data grid solutions. Data Grids come from a world where every millisecond (and now nanosecond) counts. These "data grids" thus rapidly left the disk behind and use memory as their main storage (even if this is configurable, as we will discuss later).

On the other side, NoSQL solutions have been developed mainly for web-scale industries, where latency is no less important but the target is, let's say, around one second (because the end user is a human). In that case, you do not need to answer faster and faster, but you need to serve more and more requests.

NoSQL solutions have been developed to answer specific needs…whereas Data Grids are much more configurable: Bridging the gap

If you look at the history of these solutions from a 10,000-foot view, you will see that NoSQL solutions are mainly clones of the Dynamo model that was developed at Amazon to store sessions and shopping carts.

The choices that were made fitted the needs of Amazon.com: response time predictability, infrastructure elasticity and scaling out, multi-datacenter resiliency, design for failure...

"Data Grids" come from more heterogeneous (and thus richer) environments. You need to use them to relieve the RDBMS, to keep data in your local JVM, to scale... You need to integrate them with the legacy part of your Information System, so you need SQL-like integration and you need Java, .NET and Ruby client APIs...

In short, data grids are clearly more configurable, and therefore more adaptable, than NoSQL solutions. Without being exhaustive, we can think of:

- "Data Grids" let you control the way data is stored. They all have a default implementation (Gigaspaces offers RDBMS by default, Gemfire offers file and disk based storage by default...) but in all cases you can choose the one that fits your needs: do you need to store data, do you need to relieve the existing databases...

- In order to minimize latency, data grids let you store data on disk synchronously (write-through) or asynchronously (write-behind). You can also define overflow strategies. In that case, data is stored in memory up to a threshold, beyond which it is flushed to disk (following algorithms like LRU...). NoSQL solutions have not been designed to provide these features.

- Data grids let you develop an Event Driven Architecture. An often-seen architecture is a pure RDBMS plus a Message Oriented Middleware that is responsible for propagating the modifications (via events). Data Grids provide notification mechanisms for all keys or for specified keys. Clients can thus be notified of create / update / delete operations (like triggers).

- Querying is maybe the point on which pure NoSQL solutions and data grids are converging. Basically, both solutions provide a pure map API, which means you can put, get or delete an object by its key. Data grids have provided SQL queries (with limitations on joins) for a while, but looking at the NoSQL market, you will see that NoSQL solutions are starting to provide secondary indexes that enable SQL-like queries, or MapReduce-like queries (with Brisk or DynamoDB, which provide integration with Hadoop or Elastic MapReduce).

- Data grids enable near-cache topologies. The classical way of scaling reads with an RDBMS solution is to add a cache layer on top of the RDBMS (typically memcached, with difficulties like cache synchronization, or cache partitioning to avoid bringing down the RDBMS in case of failure...). We do it, so we know it works, but data grids facilitate the deployment of "near-cache topologies", which means that data can be cached on the client side, with automatic eviction when the data is updated in the central storage, which accelerates reads...
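
To make the write-through / write-behind and overflow options from the list above more concrete, here is a minimal sketch. It is not the API of any data grid: the `OverflowCache` class is hypothetical, a plain dict stands in for the disk, and the overflow policy is a simple LRU.

```python
from collections import OrderedDict


class OverflowCache:
    """Toy in-memory store with LRU overflow to a stand-in 'disk' dict."""

    def __init__(self, capacity, disk, write_through=True):
        self.capacity = capacity
        self.disk = disk              # stand-in for the persistent store
        self.write_through = write_through
        self.memory = OrderedDict()   # least recently used entry first
        self.pending = {}             # write-behind: not yet on disk

    def put(self, key, value):
        self.memory[key] = value
        self.memory.move_to_end(key)
        if self.write_through:
            self.disk[key] = value    # synchronous write
        else:
            self.pending[key] = value  # deferred until flush()
        self._overflow()

    def get(self, key):
        if key in self.memory:
            self.memory.move_to_end(key)
            return self.memory[key]
        return self.disk.get(key)     # overflowed entries live on disk

    def flush(self):
        # In a real system this would run in the background.
        self.disk.update(self.pending)
        self.pending.clear()

    def _overflow(self):
        while len(self.memory) > self.capacity:
            key, value = self.memory.popitem(last=False)
            self.pending.pop(key, None)
            self.disk[key] = value    # an evicted entry must survive on disk


disk = {}
cache = OverflowCache(capacity=2, disk=disk, write_through=False)
cache.put("a", 1)
cache.put("b", 2)
cache.put("c", 3)                     # "a" is the LRU entry and overflows
on_disk_before_flush = dict(disk)
cache.flush()                         # "b" and "c" finally reach the disk
```

The trade-off is visible in `put`: write-through pays the disk latency on every write, whereas write-behind answers from memory and accepts that pending writes can be lost if the node dies before `flush()`.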

Both systems have the same constraints especially when it comes to ACID

The funny thing is that both systems have the same constraints, especially when it comes to ACID.

In fact, both solutions let you play with consistency and/or availability, using either quorum-based protocols or synchronous / asynchronous replication mechanisms. There is however a subtlety that can help data grids manage consistency (or at least conflict resolution): "data grids" use a master/slave topology per partition whereas NoSQL solutions use a master/master topology per partition.
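
The quorum idea can be illustrated with the usual rule of thumb: with N replicas, a write acknowledged by W replicas and a read that waits for R replicas are guaranteed to overlap when R + W > N. The sketch below assumes simple integer versions to pick the latest value; this is a simplification (Dynamo-style systems typically rely on vector clocks), and the function names are hypothetical.

```python
def quorums_overlap(n, r, w):
    """True when every read quorum intersects every write quorum."""
    return r + w > n


def read_latest(replies):
    """Pick the value with the highest version among the replies.

    `replies` is a list of (version, value) pairs returned by the
    R replicas that answered the read.
    """
    return max(replies, key=lambda reply: reply[0])[1]


strict = quorums_overlap(n=3, r=2, w=2)   # reads see the latest write
relaxed = quorums_overlap(n=3, r=1, w=1)  # faster, but may read stale data
value = read_latest([(1, "old"), (2, "new")])
```

Lowering R and W favours latency and availability; raising them favours consistency, which is the "playing with consistency and/or availability" mentioned above.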

Moreover, "data grids" can offer you atomicity and transaction management BUT under certain constraints: typically, you will have to override the partitioning mechanism to ensure data colocation, you will not be able to rebalance the cluster while transactions are pending... These features come at a cost in the operability of the solution. This may not be a blocker, depending on the use case, but NoSQL solutions like Dynamo have made their choice: operability and elasticity, with impacts on the development side.

NoSQL offers a storage vision whereas data grids offer more use cases

On that side, the comparison is not that simple. Data Grids offer much more elaborate deployment options. You can use them in a classical client / server architecture: the data is stored in dedicated JVMs whereas the business processes have their own dedicated JVMs. In that case, the data grid is seen as a pure storage layer. That case is ultimately quite close to the NoSQL solutions.

What data grids offer (and NoSQL solutions do not) is a peer-to-peer deployment where business processes and data are deployed in the same JVM. What changes is that the unit of deployment is the sum of the business process and your data. From the distributed storage point of view, there are no real advantages if you use your data grid only as storage: data will be partitioned across the cluster, based on the key. It is quite different, however, if your business case requires writing and reading a lot of local data that is specific to it and must not be shared with the other business processes. In that case, you will benefit from this kind of deployment (gains in latency and bandwidth consumption).

The main drawback of data grids is that they need to know the Java object (typically through the classpath) and, in the best case, you can choose between default Java serialization (Serializable or Externalizable) and the product-specific serialization. That can complicate upgrading the model, adding indexes... without downtime. Conversely (and this solves the previous issues), NoSQL solutions work with byte arrays: object versioning, serialization and deserialization must therefore be managed "manually" (even if these solutions often use protocols like Thrift, Avro…).
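
As an illustration of what "manually" managing versioning on top of a byte array can look like, here is a small sketch. Everything in it is an assumption made for the example: the two-byte version header, the JSON payload and the v1/v2 schemas are hypothetical, and a real project would more likely rely on Thrift or Avro as mentioned above.

```python
import json
import struct

HEADER = struct.Struct(">H")  # 2-byte big-endian schema version (assumption)


def encode(record, version):
    """Serialize a dict to bytes, prefixed with its schema version."""
    return HEADER.pack(version) + json.dumps(record).encode("utf-8")


def decode(blob):
    """Deserialize bytes and upgrade old records to the current schema."""
    (version,) = HEADER.unpack_from(blob)
    record = json.loads(blob[HEADER.size:].decode("utf-8"))
    if version == 1:
        # Hypothetical migration: v1 stored a single "name" field,
        # v2 splits it into first and last name.
        first, _, last = record.pop("name").partition(" ")
        record.update(first_name=first, last_name=last)
    return record


old_blob = encode({"name": "Ada Lovelace"}, version=1)
new_blob = encode({"first_name": "Ada", "last_name": "Lovelace"}, version=2)
upgraded = decode(old_blob)
current = decode(new_blob)
```

Because the store only sees opaque bytes, old and new records can coexist and the model can evolve without downtime, at the price of keeping this migration logic in the application.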

So what? What to conclude?

I am sure I forgot some points but, in short, both systems are under the same constraints. The clear point is that "data grids", due to their own history, are certainly more adaptable. That does not mean you should not look at NoSQL solutions, because they can fit your requirements and these solutions work (see the work of Netflix with Cassandra). More generally, I sometimes ask myself: why would we move to distributed storage (if we move at all)? Maybe because of the following elements:

- the variability of workload and the needed elasticity

- (maybe) the cost of this kind of infrastructure compared to traditional RDBMS infrastructure. I say maybe because I am convinced the economic equation must be explicitly stated.

- the resiliency of the systems (and the implied cost pressure): we now need to address higher levels of failure for less money (because the cost of mitigating a failure must not be higher than the cost of the failure itself)

- the commoditization of infrastructure, which implies having less server diversity to serve more and more different use cases

- the platform vision, which will push towards developing multi-tenant architectures